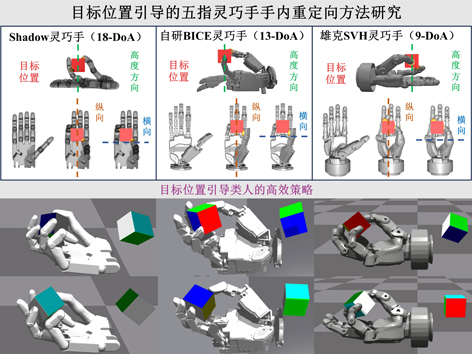

Target Position-guided In-hand Re-orientation for Five-fingered Dexterous Hands

| Citation: | ZHANG Lingjun, TANG Liang, LIU Lei. Target Position-guided In-hand Re-orientation for Five-fingered Dexterous Hands[J]. ROBOT, 2025, 47(1): 10-21. DOI: 10.13973/j.cnki.robot.240019 |


Abstract

Re-orientation involves rotating an object to a target configuration; the most challenging case is rotation from an arbitrary initial configuration to an arbitrary target configuration. To guide anthropomorphic five-fingered dexterous hands with different degrees of actuation (DoA) to perform in-hand re-orientation efficiently and in a more human-like manner, a target position-guided method for generating in-hand object re-orientation policies is proposed. Firstly, a feasible principle for designing target positions is proposed, inspired by how human hands operate during in-hand re-orientation and based on the distribution of DoA in anthropomorphic five-fingered dexterous hands. The difference between the actual and target positions of the object during the re-orientation process is used as a component of the immediate reward, guiding the hand to keep the object near the target. Secondly, inspired by the preparatory states of human hands before re-orientation tasks, a method is developed to sample the joint positions of the hand every time the state is reset, aiming to enhance manipulation capabilities. Finally, the re-orientation policy is trained using the proximal policy optimization (PPO) algorithm with a long short-term memory (LSTM) network and an asymmetric actor-critic architecture. Simulation results show that the proposed method enables the 9-DoA Schunk SVH dexterous hand, the 13-DoA BICE dexterous hand developed by Beijing Institute of Control Engineering (BICE), and the 18-DoA Shadow dexterous hand to approach the predefined maximum number of consecutive successes when performing re-orientation tasks. Moreover, compared with an in-hand object re-orientation policy generation method without target position guidance, the proposed method significantly reduces the average number of steps required to perform re-orientation tasks. The proposed method enables anthropomorphic five-fingered dexterous hands with different DoA to efficiently perform object re-orientation tasks in a human-like manner through coordinated action of the palm and fingers, significantly enhancing operational efficiency.
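The idea of using the position difference as one component of the immediate reward can be illustrated with a small sketch. This is not the paper's reward function: the weights `w_pos` and `w_rot`, the constant `eps`, and the inverse-distance form of the rotation term are all assumptions chosen only to show how a position term can be combined with an orientation term.

```python
import math

def position_distance(p, p_target):
    """Euclidean distance between the object's actual and target positions."""
    return math.dist(p, p_target)

def rotation_distance(q, q_target):
    """Angle (rad) between two unit quaternions given as (w, x, y, z)."""
    dot = abs(sum(a * b for a, b in zip(q, q_target)))
    return 2.0 * math.acos(min(1.0, dot))

def immediate_reward(p, q, p_target, q_target, w_pos=10.0, w_rot=1.0, eps=0.1):
    """Hypothetical reward: penalize position error, reward small rotation error.

    w_pos, w_rot and eps are illustrative values, not taken from the paper.
    """
    pos_term = -w_pos * position_distance(p, p_target)
    rot_term = w_rot / (rotation_distance(q, q_target) + eps)
    return pos_term + rot_term

# Same orientation error, but the second object has drifted 5 cm from the target.
identity = (1.0, 0.0, 0.0, 0.0)
near = immediate_reward((0.0, 0.0, 0.0), identity, (0.0, 0.0, 0.0), identity)
far = immediate_reward((0.05, 0.0, 0.0), identity, (0.0, 0.0, 0.0), identity)
print(near, far)
```

With the position term included, a policy that lets the object drift away from the target position receives a lower reward even when its orientation error is unchanged.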


Robotic Grasping Technology Based on Shape Analysis and Probabilistic Reasoning

School of Computer Science and Engineering, South China University of Technology, Guangzhou 510006, China


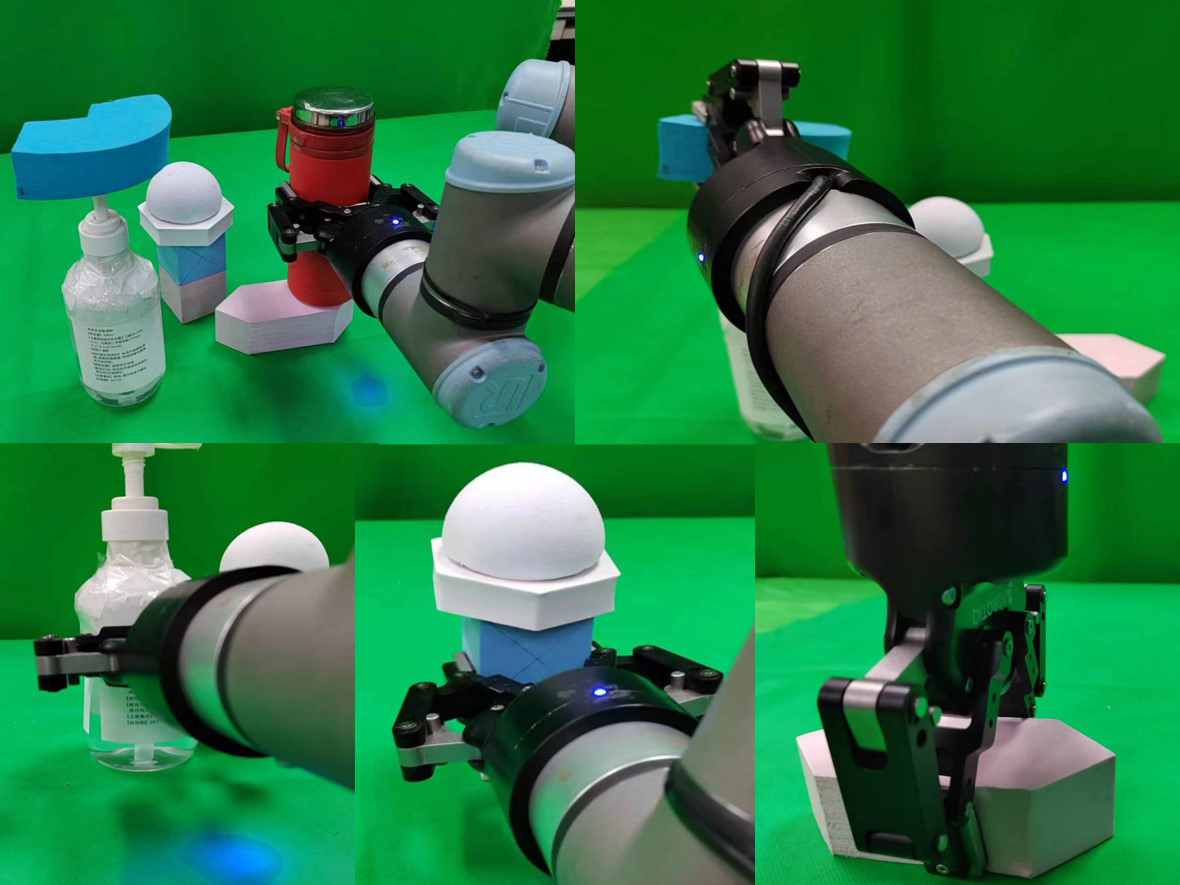

Abstract

When irregular objects are grasped and transported, they may shake and fall because of their complex and diverse shapes and structures. To address this, a robotic grasping technology based on shape analysis and probabilistic reasoning is proposed. Firstly, the dispersivity and flatness of the object's point cloud are analyzed to generate a set of candidate grasp poses. Then, the factors that cause the object to shake and fall are qualitatively analyzed in simulation, and the numbers of successful grasping and rotation-translation experiments are counted. The stability of each grasp pose is quantified using the conditional expectation method, and a PointNet discriminator is trained to evaluate and rank the candidate grasp poses. Grasping is then performed with the optimal grasp pose. The experimental results indicate that the proposed method addresses the shaking and falling of irregular objects during grasping and transport. Compared with the benchmark method, the average grasping success rate is improved to 89.2%, an increase of 2.6%, and the average transportation stability is enhanced to 84.2%, an increase of 22.7%. The proposed method enables intelligent grasping of objects in multi-object stacking scenarios, ensures stability during grasping and transport, and establishes a logical grasping sequence.


Complete Guide to Temperature Sensors-GMTROBOT


1. Types & Physical Appearance

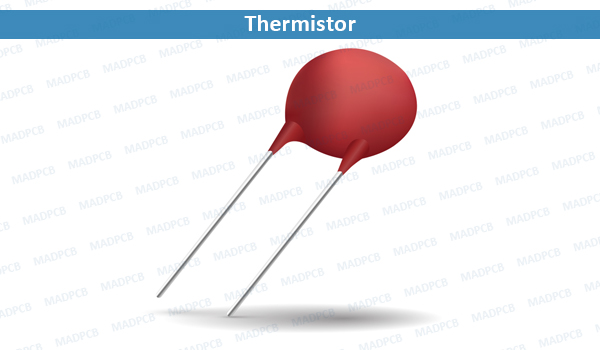

Thermistor

Small cylindrical package (1-5mm diameter), epoxy/glass coating

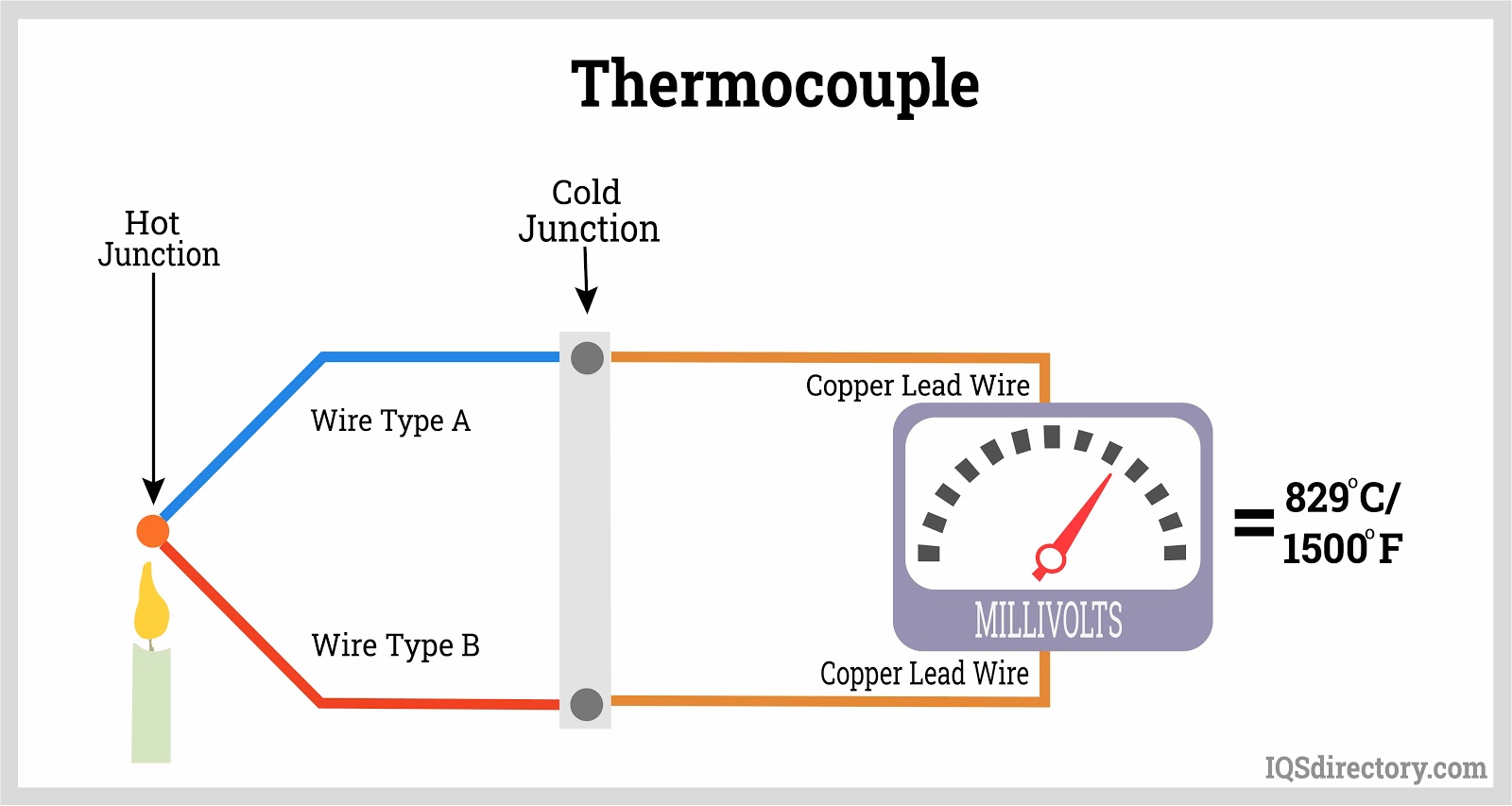

Thermocouple

Metal probe with two dissimilar metal wires

RTD (Pt100)

Stainless steel housing with platinum element

2. Internal Structure & Operation

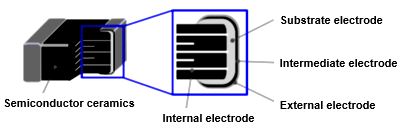

Thermistor Structure

Semiconductor ceramic core with silver electrodes

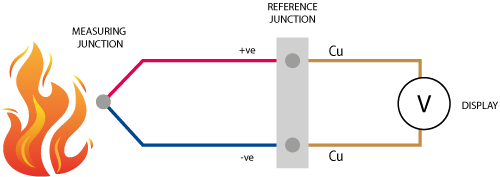

Thermocouple Working Principle

Seebeck effect diagram showing voltage generation
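As a rough numerical illustration of the Seebeck effect, the voltage can be approximated as proportional to the temperature difference between the two junctions. The sketch below assumes a constant sensitivity of about 41 µV/°C, a commonly quoted approximate figure for type K thermocouples; real thermocouples are nonlinear and are normally converted with standard reference tables, so treat this as a first-order estimate only.

```python
def thermocouple_voltage_mv(t_hot_c, t_cold_c, seebeck_uv_per_c=41.0):
    """Approximate thermocouple output (mV) for a hot/cold junction pair.

    seebeck_uv_per_c is an assumed constant sensitivity (roughly type K).
    """
    return seebeck_uv_per_c * (t_hot_c - t_cold_c) / 1000.0

def hot_junction_temp_c(voltage_mv, t_cold_c, seebeck_uv_per_c=41.0):
    """Invert the linear model: recover the hot-junction temperature (deg C)."""
    return t_cold_c + voltage_mv * 1000.0 / seebeck_uv_per_c

v = thermocouple_voltage_mv(125.0, 25.0)
print(v, hot_junction_temp_c(v, 25.0))
```

The cold-junction temperature has to be known (or measured separately) because the voltage depends only on the temperature difference.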

3. Typical Circuit Configurations

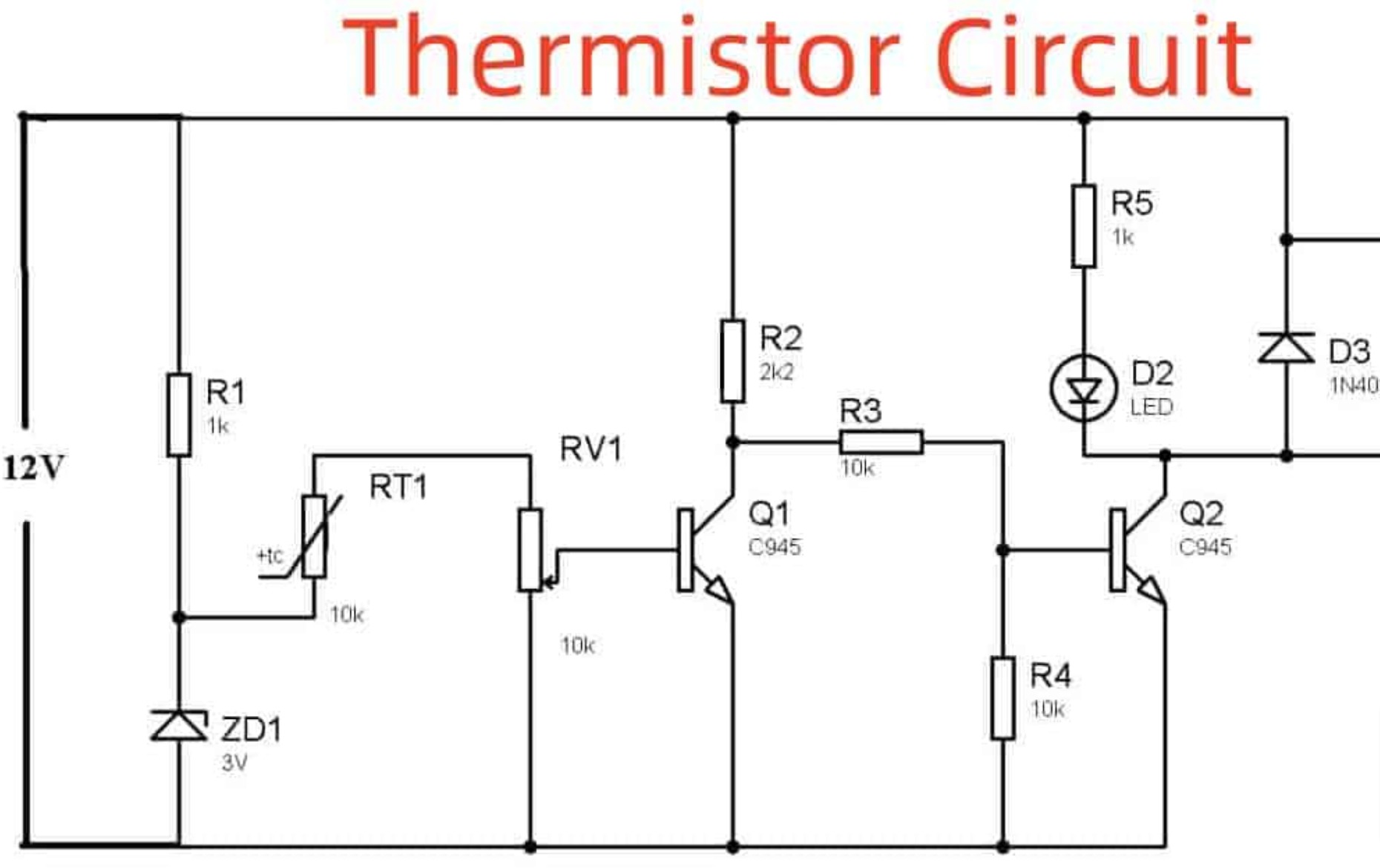

Thermistor Voltage Divider

+5V -> Fixed Resistor -> Vout -> NTC Thermistor -> GND

Vout is taken at the junction between the fixed resistor and the NTC thermistor.
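A short sketch of how a reading from this divider could be turned into a temperature. It assumes the fixed resistor connects to +5V, the NTC thermistor connects to GND, and Vout is read at their junction. The component values (10 kΩ fixed resistor, 10 kΩ NTC at 25 °C, Beta of 3950) are hypothetical, and the Beta equation is a simplified thermistor model.

```python
import math

def ntc_resistance_ohm(vout, vcc=5.0, r_fixed=10_000.0):
    """Solve the divider Vout = Vcc * R_ntc / (R_fixed + R_ntc) for R_ntc."""
    if not 0.0 < vout < vcc:
        raise ValueError("vout must lie strictly between 0 and vcc")
    return r_fixed * vout / (vcc - vout)

def ntc_temperature_c(vout, vcc=5.0, r_fixed=10_000.0,
                      r0=10_000.0, t0_c=25.0, beta=3950.0):
    """Beta-equation estimate: 1/T = 1/T0 + ln(R/R0)/beta (temperatures in K).

    r_fixed, r0, t0_c and beta are assumed example values.
    """
    r_ntc = ntc_resistance_ohm(vout, vcc, r_fixed)
    inv_t = 1.0 / (t0_c + 273.15) + math.log(r_ntc / r0) / beta
    return 1.0 / inv_t - 273.15

print(ntc_temperature_c(2.5))  # mid-scale reading: R_ntc equals r0
print(ntc_temperature_c(2.0))  # lower Vout means lower NTC resistance, so warmer
```

With the thermistor on the low side, Vout falls as temperature rises; swapping the two resistors would reverse that direction.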

DS18B20 Interface

DS18B20 pins:

- VDD (3-5V)
- DQ (data line with 4.7kΩ pull-up)
- GND
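Once the two temperature bytes have been read over the DQ line, they can be converted to degrees Celsius. The sketch below covers only that conversion step and assumes the sensor's default 12-bit resolution, where the value is a 16-bit two's-complement number in units of 1/16 °C; the 1-Wire bus transaction itself is not shown, and the function name is illustrative.

```python
def ds18b20_raw_to_celsius(lsb, msb):
    """Convert the two DS18B20 temperature bytes to degrees Celsius.

    Assumes 12-bit resolution (0.0625 deg C per count).
    """
    raw = (msb << 8) | lsb
    if raw & 0x8000:          # sign bit set: negative temperature
        raw -= 0x10000
    return raw / 16.0

print(ds18b20_raw_to_celsius(0x91, 0x01))  # positive reading
print(ds18b20_raw_to_celsius(0x5E, 0xFF))  # negative reading
```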

4. Industrial & Daily Life Applications

Factory automation temperature monitoring

Infrared forehead thermometer

Smart thermostat in home automation

What are the categories of sensors?



Sensors can be classified in several ways. The common classification schemes and their main types are:

1. By Measured Physical Quantity (Application Target)

- Temperature Sensors: Thermocouples, thermistors, infrared sensors.

- Pressure Sensors: Piezoelectric, piezoresistive, capacitive sensors.

- Light Sensors: Photoresistors, photodiodes, CCD/CMOS (image sensors).

- Motion Sensors: Accelerometers, gyroscopes, displacement sensors.

- Force/Torque Sensors: Load cells, torque sensors.

- Magnetic Sensors: Hall effect sensors, magnetoresistive sensors.

- Chemical Sensors: Gas sensors (e.g., CO₂, formaldehyde), pH sensors.

- Biological Sensors: Glucose sensors, DNA sensors, heart rate sensors.

- Humidity Sensors: Capacitive, resistive humidity sensors.

- Acoustic Sensors: Microphones, ultrasonic sensors.

2. By Working Principle

- Resistive Sensors: Detect via resistance changes (e.g., strain gauges, thermistors).

- Capacitive Sensors: Utilize capacitance changes (e.g., liquid level sensors, touchscreens).

- Inductive Sensors: Based on electromagnetic induction (e.g., proximity switches).

- Piezoelectric Sensors: Use piezoelectric effects (e.g., pressure/vibration detection).

- Photoelectric Sensors: Rely on photoelectric effects (e.g., photodiodes, fiber-optic sensors).

- Thermoelectric Sensors: Leverage thermoelectric effects (e.g., thermocouples).

- Magnetoelectric Sensors: Detect magnetic field changes (e.g., Hall sensors).

- Chemical/Biological Sensors: Use chemical reactions or bio-recognition (e.g., enzyme electrodes, gas sensors).

3. By Output Signal Type

- Analog Sensors: Output continuous signals (e.g., voltage, current).

- Digital Sensors: Output discrete signals (e.g., switch signals, digital codes).

- Pulse/Frequency Sensors: Generate pulse/frequency signals (e.g., tachometers).
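The difference between these output types shows up in how a reading is converted to a physical value. The sketch below is illustrative only: the 3.3 V reference, 12-bit ADC width, and pulses-per-revolution value are assumed example parameters, not properties of any particular sensor.

```python
def adc_counts_to_volts(counts, vref=3.3, bits=12):
    """Analog output: scale a sampled ADC count back to a voltage."""
    full_scale = (1 << bits) - 1
    return counts * vref / full_scale

def pulses_to_rpm(pulse_hz, pulses_per_rev):
    """Pulse/frequency output: convert a tachometer pulse rate to RPM."""
    return pulse_hz * 60.0 / pulses_per_rev

print(adc_counts_to_volts(2048))   # roughly mid-scale on a 12-bit converter
print(pulses_to_rpm(100.0, 2))     # 100 pulses/s at 2 pulses per revolution
```

A digital sensor, by contrast, delivers an already-encoded value, so the host only has to decode it.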

4. By Power Supply

- Active Sensors: Require external power (e.g., most electronic sensors).

- Passive Sensors: Self-powered (e.g., thermocouples, piezoelectric sensors).

5. By Application Field

- Industrial Sensors: Pressure, flow, temperature sensors (for automation).

- Medical Sensors: Pulse oximeters, ECG electrodes, glucose monitors.

- Automotive Sensors: Oxygen sensors, tire pressure monitors, ADAS (radar, cameras).

- Environmental Sensors: PM2.5 detectors, water quality sensors, weather stations.

- Consumer Electronics Sensors: Gyroscopes in smartphones, fingerprint scanners, ambient light sensors.

6. By Material/Structure

- MEMS Sensors: Micro-electromechanical systems (e.g., accelerometers, microphones).

- Fiber-Optic Sensors: Use optical signals (e.g., temperature/strain detection).

- Flexible Sensors: Bendable materials (e.g., electronic skin, wearables).

7. By Intelligence Level

- Traditional Sensors: Output raw signals.

- Smart Sensors: Integrated signal processing, self-calibration, or communication (e.g., IoT sensors).

8. Other Special Classifications

- Contact vs. Non-contact: Contact temperature sensors vs. infrared sensors.

- Environmental Adaptability: Waterproof, explosion-proof, high-temperature-resistant sensors (e.g., industrial-grade).

Summary

Sensor classifications are not rigid: many sensors fall into several categories at once (e.g., a MEMS accelerometer is both a motion sensor and a digital sensor). Selection depends on measurement requirements, environment, accuracy, and cost.
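Because one sensor can sit in several categories at once, a catalog is easier to query when each classification axis is stored as a separate field. The sketch below is a hypothetical data structure; the class name, field names, and catalog entries are illustrative choices based on the categories listed above.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SensorProfile:
    """One sensor described along several independent classification axes."""
    name: str
    measured_quantity: str
    working_principle: str
    output_signal: str
    structure: str = "conventional"

CATALOG = [
    SensorProfile("thermocouple", "temperature", "thermoelectric", "analog"),
    SensorProfile("NTC thermistor", "temperature", "resistive", "analog"),
    SensorProfile("MEMS accelerometer", "motion", "capacitive", "digital", "MEMS"),
]

def find(catalog, **criteria):
    """Return names of sensors matching every given axis=value criterion."""
    return [s.name for s in catalog
            if all(getattr(s, axis) == value for axis, value in criteria.items())]

print(find(CATALOG, measured_quantity="temperature"))
print(find(CATALOG, output_signal="digital", structure="MEMS"))
```

Filtering on one axis at a time mirrors how the selection criteria (measurement requirement, environment, accuracy, cost) are usually narrowed down.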

1.4 m industrial welding robot, 2 m argon arc welding robot, fully automatic CNC robot


Product description:

| Attribute | Value |
| --- | --- |
| Product name | Industrial welding robot |
| Working range | 1.4 m |
| Imported | No |
| Applicable material | Metal |
| Control mode | Automatic |
| Current type | Alternating current |
| Input voltage | 380V |
| Operating principle | Inverter |
| Cooling mode | Air cooling |
| Warranty | 1 year |
| Applicable industry | General purpose |
| Welding method | Swing welding, fish-scale welding, automatic welding |
| Installation | Ground installation |
| Sales region | Nationwide |
| Use | Automated welding of various workpieces |


Kuafu humanoid robot debuted at Huawei Developer Conference, Robot 100ETF(159530) rose 2.67%


Robot 100ETF (159530): tracks the CNI Robot Industry Index, whose sample consists of listed companies with business in the robot industry, to reflect the industry's market performance. As of 14:44 on June 26, 2024, the Robot 100ETF (159530) was up 2.67%.

In the news, the Huawei Developer Conference (HDC 2024) recently opened and demonstrated the potential of the Kuafu humanoid robot, equipped with a large model, for generalized application in industrial and home scenarios. The Kuafu humanoid robot is an interim result of the strategic cooperation between Huawei Cloud and Leju Robot. With access to the “Pangu embodied-intelligence large model”, the robot's intelligence and generalization ability have improved, which is an important step for the intelligent development of humanoid robots in China.

Tianfeng Securities believes that the downstream market for humanoid robots is expected to expand. With efforts from across society, technology upgrades, and the evolution of the industry, humanoid robots are expected to penetrate service, manufacturing, and other application fields, and the release of market potential is expected to accelerate.
